This blog is not called Variable Variability for nothing. Variability is the most fascinating aspect of the climate system. Like a fractal, you can zoom in and out of a temperature signal and keep on finding interesting patterns. The same goes for wind, humidity, precipitation and clouds. This beauty was one of the reasons why I changed from physics to the atmospheric sciences, not being aware at the time that physicists had also started studying complexity.

There is variability on all spatial scales, from clusters of cloud droplets to showers, fronts and depressions. There is variability on all temporal scales. With a fast thermometer you can see temperature fluctuations within a second and the effect of clouds passing by. Temperature has a daily cycle, day-to-day fluctuations, seasonal fluctuations, year-to-year fluctuations and so on.

The fluctuations also fluctuate. Cumulus fields may contain young growing clouds with a lot of variability, older smoother collapsing clouds and a smooth haze in between. Temperature fluctuations are different during the night when the atmosphere is stable, after sunrise when the sun heats the atmosphere from below, and on a summer afternoon when thermals develop and become larger and larger. The precipitation can come down as a shower or as drizzle.

This makes measuring the atmosphere very challenging. If your instrument is good at measuring details, such as a temperature or cloud water probe on an aircraft, you will have to move it to get a larger spatial overview. The measurement will have to be fast because the atmosphere is changing continually. You can also select an instrument that measures large volumes or areas, such as a satellite, but then you miss out on much of the detail. A satellite looking down on a mountain may measure the brightness of some mixture of the white snow-capped mountains, dark rocks, forests, lush green valleys with agriculture and rushing brooks.

The same problem happens when you model the atmosphere. A typical global atmosphere-ocean climate model has a resolution of about 50 km. Those beautiful snow-capped mountains outside are smoothed to fit into the model and may no longer have any snow. If you want to study how mountain glaciers and snow cover feed the rivers, you thus cannot use the simulation of such a global climate model directly. You need a method to generate a high-resolution field from the low-resolution climate model fields. This is called downscaling, a beautiful topic for fans of variability.

Deterministic and stochastic downscaling

For the above mountain snow problem, a simple downscaling method would take a high-resolution height dataset of the mountain and make the higher parts colder and the lower parts warmer. How much exactly, you can estimate from a large number of temperature measurements with weather balloons. However, it is not always colder at the top. On cloud-free nights, the surface rapidly cools and in turn cools the air above. This cold air flows down the mountain and fills the valleys with cold air. Thus the next step is to make such a downscaling method weather dependent.

Such direct relationships between height and temperature are not always enough. This is best seen for precipitation. When the climate model computes that it will rain 1 mm per hour, it makes a huge difference whether this is drizzle everywhere or a shower in a small part of the 50 times 50 km box. The drizzle will be intercepted by the trees and a large part will evaporate quickly again. The drizzle that lands on the ground is taken up and can feed the vegetation. Only a small part of the heavy shower will be intercepted by trees, most of it will land on the ground, which can only absorb a small part fast enough and the rest runs over the land towards brooks and rivers. Much of the vegetation in this box did not get any water and the rivers swell much faster.

In the precipitation example, it is not enough to give certain regions more and others less precipitation; the downscaling needs to add random variability. How much variability needs to be added depends on the weather. On a dreary winter's day the rain will be quite uniform, while on a sultry summer evening the rain more likely comes down as a strong shower.
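To make the idea of weather-dependent random variability concrete, here is a minimal Python sketch. It spreads the rain rate of one coarse box over its sub-pixels with random weights and rescales them so that the box mean is preserved. The function name, the `variability` parameter and the choice of lognormal weights are assumptions made for this illustration; they are not the scheme used in the study described below.

```python
import math
import random

def disaggregate_rain(coarse_rate, n_pixels, variability, rng):
    """Spread a coarse-box rain rate (mm/h) over n_pixels sub-pixels.

    variability = 0 gives the same rate everywhere (drizzle); larger
    values concentrate the rain in fewer pixels (shower).
    Assumption for illustration: lognormal random weights.
    """
    weights = [math.exp(variability * rng.gauss(0.0, 1.0))
               for _ in range(n_pixels)]
    mean_weight = sum(weights) / n_pixels
    # Rescale so that the mean over the box equals the coarse rate.
    return [coarse_rate * w / mean_weight for w in weights]

rng = random.Random(42)
drizzle = disaggregate_rain(1.0, 49, 0.0, rng)  # dreary winter's day
shower = disaggregate_rain(1.0, 49, 2.0, rng)   # sultry summer evening
```

Both fields have the same box mean of 1 mm per hour, but in the second one most of the rain falls in a few pixels. In a real method the amount of variability would have to be estimated from the weather situation rather than set by hand.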

Genetic Programming

There are many downscaling methods. This is because the aims of the downscaling depend on the application. Sometimes making accurate predictions is important; sometimes it is important to get the long-term statistics right; sometimes the bias in the mean is important; sometimes the extremes. For some applications it is enough to have data that is locally realistic, sometimes also the spatial patterns are important. Even if the aim is the same, downscaling precipitation is very different in the moderate European climate than it is in the tropical simmering pot.

With all these different aims and climates, it is a lot of work to develop and test downscaling methods. We hope that we can automate a large part of this work using machine learning: Ideally we only set the aims and the computer develops the downscaling method.

We do this with a method called "Genetic Programming", which uses a computational approach that is inspired by the evolution of species (Poli and colleagues, 2016). Every downscaling rule is a small computer program represented by a tree structure.

The main difference from most other optimization approaches is that GP uses a population. Every downscaling rule is a member of this population. The best members of the population have the highest chance to reproduce. When they cross-breed, two branches of the tree are exchanged. When they mutate, an old branch is substituted by a new random branch. It is a cartoonish version of evolution, but it works.
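The tree representation and the two operators can be sketched in a few lines of Python. This is an illustration only: the nested-tuple encoding, the three arithmetic functions and the predictor names `t_coarse`, `gradient` and `dz` are hypothetical choices, not the function set or the code of the study.

```python
import operator
import random

FUNCTIONS = {"add": operator.add, "sub": operator.sub, "mul": operator.mul}
TERMINALS = ["t_coarse", "gradient", "dz"]  # hypothetical predictor names

def random_tree(rng, depth):
    """Grow a random expression tree as nested tuples."""
    if depth == 0 or rng.random() < 0.3:
        return rng.choice(TERMINALS)
    name = rng.choice(sorted(FUNCTIONS))
    return (name, random_tree(rng, depth - 1), random_tree(rng, depth - 1))

def evaluate(tree, inputs):
    """Evaluate a rule for one pixel; inputs maps terminal names to values."""
    if isinstance(tree, str):
        return inputs[tree]
    name, left, right = tree
    return FUNCTIONS[name](evaluate(left, inputs), evaluate(right, inputs))

def paths(tree, prefix=()):
    """List the position of every node (the root is the empty path)."""
    found = [prefix]
    if not isinstance(tree, str):
        found += paths(tree[1], prefix + (1,)) + paths(tree[2], prefix + (2,))
    return found

def get_branch(tree, path):
    """Return the branch of tree that starts at path."""
    for index in path:
        tree = tree[index]
    return tree

def replace_branch(tree, path, branch):
    """Return a copy of tree with the node at path replaced by branch."""
    if not path:
        return branch
    parts = list(tree)
    parts[path[0]] = replace_branch(tree[path[0]], path[1:], branch)
    return tuple(parts)

def crossover(parent_a, parent_b, rng):
    """Exchange one randomly chosen branch between two parents."""
    path_a = rng.choice(paths(parent_a))
    path_b = rng.choice(paths(parent_b))
    child_a = replace_branch(parent_a, path_a, get_branch(parent_b, path_b))
    child_b = replace_branch(parent_b, path_b, get_branch(parent_a, path_a))
    return child_a, child_b

def mutate(tree, rng, depth=2):
    """Substitute one randomly chosen branch by a new random branch."""
    return replace_branch(tree, rng.choice(paths(tree)), random_tree(rng, depth))

# A hand-written rule: coarse temperature plus gradient times height anomaly.
rule = ("add", "t_coarse", ("mul", "gradient", "dz"))
inputs = {"t_coarse": 280.0, "gradient": -0.0065, "dz": 100.0}
value = evaluate(rule, inputs)
```

Because a rule is just a small expression tree, it stays readable after many generations of cross-breeding and mutation, at least as long as it stays small.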

We have multiple aims: we would like the solution to be accurate, we would like the variability to be realistic and we would like the downscaling rule to be small. You can try to combine all these aims into one number and then optimize that number. This is not easy because the aims can conflict.

1. A more accurate solution is often a larger solution.

2. Typically only a part of the small-scale variability can be predicted. A method that only adds this predictable part of the variability would add too little variability. If you add noise to such a solution, its accuracy goes down again.

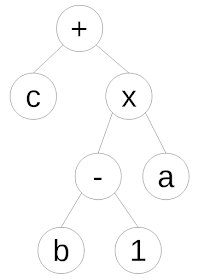

Instead of combining all aims into one number we have used the so-called “Pareto approach”. A Pareto optimal solution is best explained visually with two aims; see the graphic below. The square boxes are the Pareto optimal solutions. The dots are not Pareto optimal because there are solutions that are better for both aims. The solutions that are not optimal are not excluded. We work with two populations: a population of Pareto optimal solutions and a population of non-optimal solutions. The non-optimal solutions are naturally less likely to reproduce.

Example of a Pareto optimization with two aims. The squares are the Pareto optimal solutions, the circles the non-optimal solutions. Figure after Zitzler and Thiele (1999).
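For readers who prefer code to pictures, the split into Pareto optimal and non-optimal solutions can be sketched as follows. The sketch assumes that every aim is expressed as a cost (smaller is better) and uses the usual definition of dominance: at least as good for every aim and strictly better for at least one. The example scores are invented.

```python
def dominates(a, b):
    """True if a is at least as good as b for every aim and better for one.

    Aims are costs here: smaller is better.
    """
    no_worse = all(x <= y for x, y in zip(a, b))
    better = any(x < y for x, y in zip(a, b))
    return no_worse and better

def split_pareto(scores):
    """Split solutions into Pareto optimal and non-optimal ones."""
    optimal, non_optimal = [], []
    for i, candidate in enumerate(scores):
        others = (s for j, s in enumerate(scores) if j != i)
        if any(dominates(other, candidate) for other in others):
            non_optimal.append(candidate)
        else:
            optimal.append(candidate)
    return optimal, non_optimal

# Invented (error, size) pairs for five downscaling rules.
scores = [(1.0, 5.0), (2.0, 3.0), (3.0, 4.0), (4.0, 1.0), (4.0, 4.0)]
optimal, non_optimal = split_pareto(scores)
# optimal     -> [(1.0, 5.0), (2.0, 3.0), (4.0, 1.0)]
# non_optimal -> [(3.0, 4.0), (4.0, 4.0)]
```

The rule with scores (2.0, 3.0) beats both non-optimal rules on error and on size, while none of the three optimal rules is beaten on both aims by any other.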

Coupling atmospheric and surface models

We have the impression that this Pareto approach has made it possible to solve a quite complicated problem. Our problem was to downscale the fields near the surface of an atmospheric model before they are passed to a model for the surface (Zerenner and colleagues, 2016; Schomburg and colleagues, 2010). These were, for instance, fields of temperature and wind speed.

The atmospheric model we used is the weather prediction model of the German weather service. It has a horizontal resolution of 2.8 km and computes the state of the atmosphere every few seconds. We run the surface model TERRA at 400 m resolution. Below every atmospheric column of 2.8x2.8 km, there are 7x7 surface pixels.
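The relation between the two grids can be illustrated with a small Python sketch: copying every coarse value to the 7x7 pixels below it, and averaging 7x7 blocks back to one coarse value. Copying the coarse value unchanged is the case of no downscaling at all; a downscaling rule then adds fine-scale structure on top. The function names are hypothetical and plain lists are used to keep the example self-contained.

```python
FACTOR = 7  # 7 x 400 m = 2.8 km: 7x7 surface pixels per atmospheric column

def replicate(coarse):
    """Copy every coarse value to the FACTOR x FACTOR fine pixels below it."""
    fine = []
    for row in coarse:
        fine_row = [value for value in row for _ in range(FACTOR)]
        fine.extend(list(fine_row) for _ in range(FACTOR))
    return fine

def block_mean(fine):
    """Average every FACTOR x FACTOR block back to one coarse value."""
    n_rows, n_cols = len(fine) // FACTOR, len(fine[0]) // FACTOR
    coarse = []
    for i in range(n_rows):
        coarse_row = []
        for j in range(n_cols):
            block = [fine[i * FACTOR + di][j * FACTOR + dj]
                     for di in range(FACTOR) for dj in range(FACTOR)]
            coarse_row.append(sum(block) / len(block))
        coarse.append(coarse_row)
    return coarse

coarse_t = [[280.0, 281.0], [282.0, 283.0]]  # invented temperatures in K
fine_t = replicate(coarse_t)                 # 14 x 14 fine pixels
back = block_mean(fine_t)                    # averages back to the 2 x 2 grid
```

Averaging the fine field back to the coarse grid is also a convenient check that a downscaling step has not changed the coarse-scale mean.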

The spatial variability of the land surface can be huge; there can be large differences in height, vegetation, soil type and humidity. It is also easier to run a surface model at a higher spatial resolution because it does not need to be computed so often; the variations in time are smaller.

To be able to make downscaling rules, we needed to know how much variability the 400x400 m atmospheric fields should have. We studied this using a so-called training dataset, which was made by making atmospheric model runs with 400 m resolution for a smaller-than-usual area for a number of days. This would take too much computer power for a daily weather prediction for all of Germany, but a few days on a smaller region are okay. An additional number of 400 m model runs was made to be able to validate how well the downscaling rules work on an independent dataset.

The figure below shows an example for temperature during the day. The panel to the left shows the coarse temperature field after smoothing it with a spline, which preserves the coarse scale mean. The panel in the middle shows the temperature field after downscaling with an example downscaling rule. This can be compared to the 400 m atmospheric field the coarse field was originally computed from on the right. During the day, the downscaling of temperature works very well.

The figure below is the temperature field during a clear-sky night. This is a difficult case. On cloud-free nights the air close to the ground cools and gathers in the valleys. These flows are quite close to the ground, but a good rule was to take the temperature gradient in the lower model layers and multiply it by the height anomalies (height differences from the spline-smoothed coarse field).
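Written out as code, a rule of this kind is very short. The Python sketch below adds the gradient times the height anomaly to the smoothed coarse temperature; the function name, the argument names and the numbers are assumptions for illustration, and the rule found by the Genetic Programming may differ in its details.

```python
def downscale_temperature(t_smooth, gradient, height, height_smooth):
    """Add gradient times height anomaly to the smoothed coarse temperature.

    t_smooth:      spline-smoothed coarse temperature per fine pixel (K)
    gradient:      vertical temperature gradient in the lower layers (K/m)
    height:        fine-scale surface height per pixel (m)
    height_smooth: spline-smoothed coarse surface height per pixel (m)
    """
    return [t + gradient * (z - z_smooth)
            for t, z, z_smooth in zip(t_smooth, height, height_smooth)]

# Invented clear-sky night with an inversion: temperature rises with height.
night = downscale_temperature(
    t_smooth=[275.0, 275.0],
    gradient=0.02,               # K/m, assumed value
    height=[450.0, 550.0],       # a valley pixel and a hill pixel
    height_smooth=[500.0, 500.0],
)
```

With these numbers the valley pixel, 50 m below the smoothed terrain, comes out about 1 K colder and the hill pixel about 1 K warmer. Because the gradient is taken from the model itself, the same rule makes the hills colder during the day, when temperature decreases with height.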

Having a population of Pareto optimal solutions is one advantage of our approach. There is normally a trade-off between the size of the solution and its performance, and having multiple solutions means that you can study this and then choose a reasonable compromise.

In contrast to working with artificial neural networks as the machine learning method, the GP solution is a piece of code, which you can understand. You can thus select a solution that makes sense physically and is thus more likely to also work in situations that are not in the training dataset. You can study the solutions that seem strange, try to understand why they work and gain insight into your problem.

This statistical downscaling as an interface between two physical models is a beautiful synergy of statistics and physics. Physics and statistics are often presented as antagonists, but they actually strengthen each other. Physics should inform your statistical analysis and the above is an example where statistics makes a physical model more realistic (not performing a downscaling is also a statistical assumption, just less visible and less physical).

I would even argue that the most interesting current research in the atmospheric sciences merges statistics and physics: ensemble weather prediction and decadal climate prediction, bias corrections of such ensembles, model output statistics, climate model emulators, particle assimilation methods, downscaling global climate models using regional climate models and statistical downscaling, statistically selecting representative weather conditions for downscaling with regional climate models and multivariate interpolation. My work on adaptive parameterisation combining the strengths of more statistical parameterisations with more physical parameterisations is also an example.


Related reading

On cloud structure

An idea to combat bloat in genetic programming

References

Poli, R., W.B. Langdon and N.F. McPhee, 2016: A field guide to genetic programming. Published via Lulu.com (With contributions by J.R. Koza).

Schomburg, A., V.K.C. Venema, R. Lindau, F. Ament and C. Simmer, 2010: A downscaling scheme for atmospheric variables to drive soil-vegetation-atmosphere transfer models. Tellus B, 62, no. 4, pp. 242-258, doi: 10.1111/j.1600-0889.2010.00466.x.

Zerenner, Tanja, Victor Venema, Petra Friederichs and Clemens Simmer, 2016: Downscaling near-surface atmospheric fields with multi-objective Genetic Programming. Environmental Modelling & Software, in press.

Zitzler, Eckart and Lothar Thiele, 1999: Multiobjective evolutionary algorithms: a comparative case study and the strength Pareto approach. IEEE transactions on Evolutionary Computation 3.4, pp. 257-271, 10.1109/4235.797969.

* Sierpinski fractal at the top was generated by Nol Aders and is used under a GNU Free Documentation License.

* Photo of mountain with clouds all around it (Cloud shroud) by Zoltán Vörös and is used under a Creative Commons Attribution 2.0 Generic (CC BY 2.0) license.
